If you love your data, share it!

On 28 January we piloted our new workshop “How to publish your data” in which we discuss with researchers what decisions to make and what steps to take to publish their data in a public repository.

There can be many reasons why researchers want to publish their data:

- to increase their visibility,

- to boost their citations,

- to reach an audience outside of academia,

- to be FAIR,

- to facilitate a culture change towards sharing,

- or just to meet funder requirements.

We often see that institutions and funders do not have requirements for data preservation that extend beyond 10-15 years, but sometimes data prove to be very valuable for longer, or even because those many years have passed. All the more important to choose a publication platform that not only makes the data easy to find, but has a vison towards sustainable access.

On 28 January we piloted our new workshop “How to publish your data: the benefits and practices of making your research more widely available”. In it we discussed the decisions involved in publishing data, we looked at what makes a dataset FAIR, and we helped participants try out a data upload. The workshop attracted a small group of researchers from across the university and we asked them for feedback on our pilot so that we could develop and improve the workshop and offer it again during 2020.

The Leiden University Libraries' Centre for Digital Scholarship has been offering workshops helping Leiden University researchers to write a data management plan for some years. We started to realise that those people who had written plans some time ago might now be arriving at a point where they would like to publish their data, and therefore we anticipated a growing need for support for this later stage of the process.

The idea for the workshop and the overview of what to cover were developed by staff in the Centre for Digital Scholarship during 2019. Through personal connections and serendipity, we came across a couple of other institutions running similar workshops and so gained some ideas and insight into what might work from looking at their slides.

In January we were ready to pilot the workshop, which we designed with a focus on the method HOW to publish data rather than on the reasons WHY.

Researchers from four different faculties attended, and they shared similar reasons for wanting to publish their data: their funder required it, and when the research project ends they want to share the results with a wider audience for potential re-use, reproducibility, and to be ‘open’. But irrespective of what the drivers may be, there are still similar decisions to be made about how to go about publishing the data.

Contents of the workshop

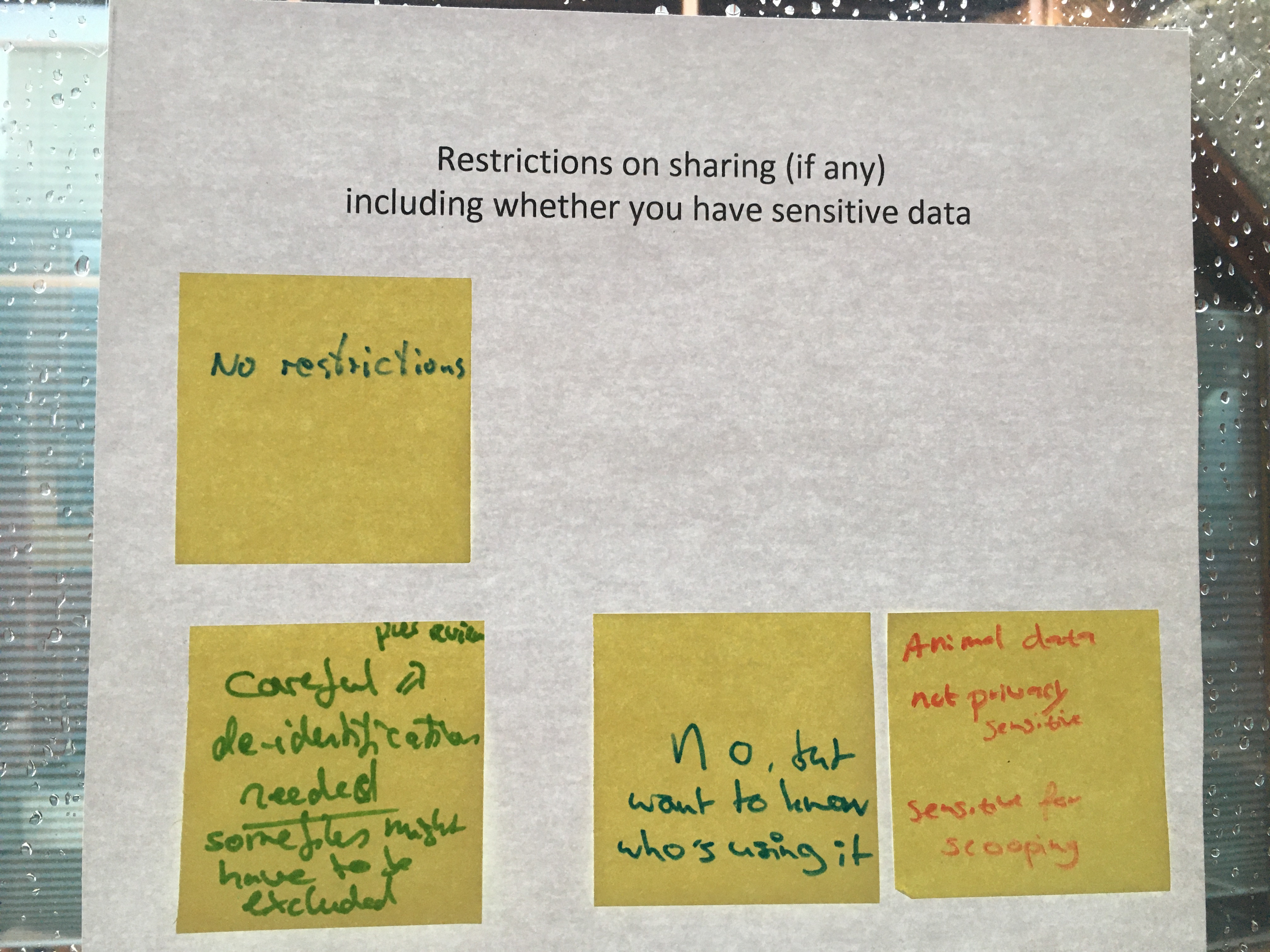

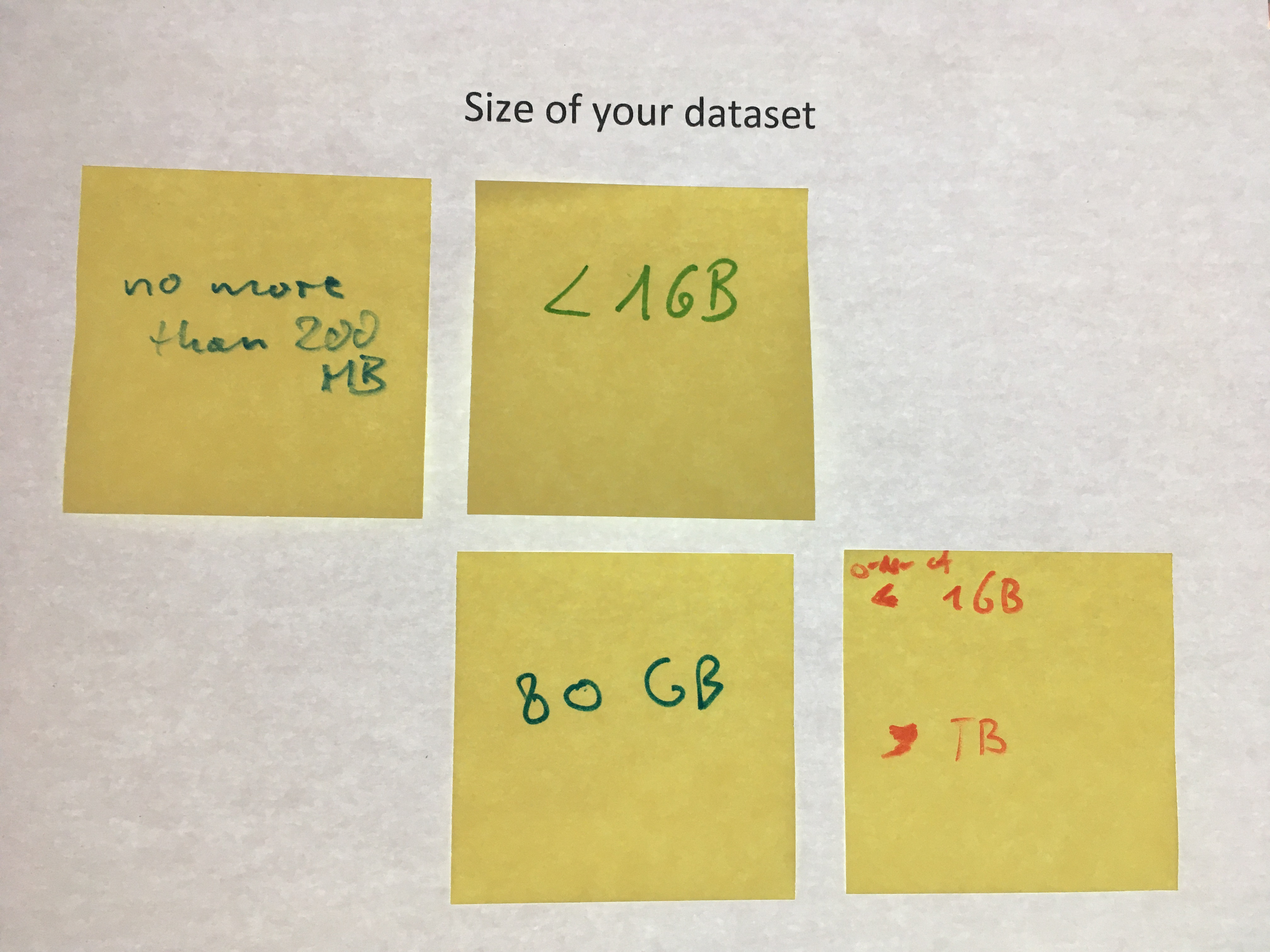

After asking attendees to describe their dataset and its characteristics, we discussed the decisions that need to be made when packing that dataset into something that can be published. This includes decisions about the best file formats to use, the organization of the data, whether there are upload restrictions, or sharing restrictions, and what accompanying materials should be with, or part of, the dataset. One question that arose that we will address next time is how to choose a licence for the data.

After getting an idea of the kind of datasets that the attendees were dealing with, we did a mock upload of a dataset of economic historical data to Zenodo.

Though many sound alternatives are available, we picked this particular data archiving service because Zenodo:

- Is a multidisciplinary data repository – our attendees come from different disciplines;

- Has a ‘community’ for ERC funded projects – 2 of the participants work on ERC projects;

- Has a very straightforward upload form: no rocket science required;

- Provides its dataset with a DOI;

- Allows for integration with Github;

- Allows for updates and versioning;

- Last but not least, has a sandbox environment making it very suitable for practice uploads in workshops.

We finished up by briefly showing other data repositories that could be considered as an alternative to Zenodo, and how to find out about the possibilities using Leiden’s new Research Data Services Catalogue as well as international registries such as re3data.org and FAIRsharing.org.

Our expert on FAIR data, Kristina Hettne, closed the workshop with her analysis of the FAIRness of the dataset. There is no repository that allows for a researcher to meet all the FAIR principles, but the developers are working hard towards achieving this goal. As a researcher you can do your share by focusing on the “Interoperable” and “Reusable” aspects of FAIR.

To conclude:

As a researcher you do a GOOD job if you:

- Fill out as much as possible of the repository fields when you submit your data.

As a researcher you do a GREAT job if you:

- Use metadata standards to record metadata elements.

As a researcher you do an AMAZING job if you:

- Use standard vocabularies to record data elements.

- Save your data in a FAIR interoperable format such as XML or RDF.

With many different ideas across various disciplines about what metadata are necessary (must haves) or useful (nice to haves) to make a dataset understandable, there exist many different ways to annotate. During a project we see researchers documenting according to the standards of their discipline. However, it is when people from outside your subject start using your data that you are triggered to rethink your metadata schemes and your ideas about the minimum requirements to make your data suitable for re-use by someone else.

If you think this is difficult, you are quite right: at the Centre for Digital Scholarship we are very happy to help!

Are you interested in attending the workshop?

With some tweaks we plan to run this workshop again as a regular part of our programme, with the next events planned for 27 March and 23 June 2020. If these don’t suit you, take a look at the Centre for Digital Scholarship calendar for future dates.

And you can register for a place for free!