Demystifying research data interoperability

Of the FAIR principles, those relating to interoperability are the most challenging. Ari Asmi of the Leiden University Libraries’ Centre for Digital Scholarship is an interoperability expert and organised a workshop on the topic in February 2026. This blog post summarises its key highlights.

The FAIR principles are the basis of research data management, but not all letters of FAIRness get the same level of love from researchers. Findability and Accessibility are quite well established already, and several mature services exist. Reusability is understandable as the overarching goal, and most of its features are well recognised by anyone who works in and around research.

However, Interoperability is the ugly duckling of the acronym: it is presented as a technical requirement with references to unfamiliar concepts like metadata and ontologies, using highly specific terminology and jargon. Even worse, the purpose and necessity of interoperability are not very clear to many data professionals or researchers. Why would anyone make the effort to find obscure terms from complex technical vocabularies for their metadata if the reason for doing so is unknown?

Something had to be done about this, so in 2025 the Dutch Research Council (NWO) started several projects at Dutch universities to hire experts to tackle the interoperability issue. These initiatives were supported by similar investments in the Thematic Digital Competence Centres (TDCCs). In February 2026, the Leiden and TU Delft initiatives resulted in a workshop called “Demystifying research data interoperability”. More than 30 data professionals from both universities and from the wider Dutch research landscape discussed the meaning, purpose, and methods of data interoperability in a practical sense. The meeting was organised at The Hague campus by both universities’ libraries together with data interoperability experts from all three TDCCs.

The workshop presentations and discussions showed that interoperability information is crucial for finding and reusing research data, particularly via advanced data analysis tools and other automated means. The growing adoption of Large Language Models and other machine learning methods and search tools makes the accurate description of the context, meaning, intended uses, and contents of datasets increasingly important. Providing structured context information via well-defined terms, with semantics linked through ontologies and taxonomies, is one way to achieve a high level of interoperability: it reduces ambiguity and facilitates the integration of disparate datasets.
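To make the idea of structured context information a little more concrete, here is a minimal sketch in Python of a machine-readable dataset description. It was not shown at the workshop; it simply assumes the general-purpose schema.org vocabulary (`Dataset`, `PropertyValue`) as the source of well-defined terms. The dataset, the helper `build_dataset_record`, and the `https://example.org/vocab/...` term address are all hypothetical placeholders; in practice the term would point to an entry in a vocabulary used in your own discipline.

```python
import json


def build_dataset_record(name, description, keywords, variables):
    """Return a JSON-LD style dictionary describing a dataset.

    Assumption: schema.org is used as the vocabulary. Each variable is
    linked to a term URI so that a machine can look up its meaning.
    """
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "description": description,
        # Remove duplicates and sort so the record is reproducible.
        "keywords": sorted(set(keywords)),
        "variableMeasured": [
            {
                "@type": "PropertyValue",
                "name": variable["name"],
                "propertyID": variable["term_uri"],
                "unitText": variable["unit"],
            }
            for variable in variables
        ],
    }


# Hypothetical example dataset; the term URI is a placeholder.
record = build_dataset_record(
    name="Example temperature measurements",
    description="Hourly air temperature from a single station.",
    keywords=["temperature", "meteorology", "temperature"],
    variables=[
        {
            "name": "air_temperature",
            "term_uri": "https://example.org/vocab/air-temperature",
            "unit": "degree Celsius",
        }
    ],
)

print(json.dumps(record, indent=2))
```

The point of the sketch is the link between a local column name (`air_temperature`) and a shared term identifier: that link is what lets software combine this dataset with others that use a different local name for the same quantity.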

However, machine-to-machine information exchange is perhaps not the most pressing concern for many datasets. In the workshop discussions, it was seen as crucial to first identify realistic use cases, in order to keep the effort scalable, and then to select the tools that meet these needs. For many groups and researchers, it is much more important to start with the human-oriented aspects of interoperability: consistently documenting dataset contents in less structured and less strict ways, for example by adding READMEs, keywords, and other context to the datasets.
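Even this human-oriented documentation benefits from being consistent. As an illustration only, the following Python sketch generates a plain-text README from a few fields, so that every dataset in a group is described in the same layout. The function `render_readme`, the field names, and the example dataset are assumptions made for this sketch, not a template recommended at the workshop.

```python
def render_readme(title, summary, keywords, columns):
    """Return plain-text README content for a dataset.

    `columns` maps each column name to a short human-readable meaning.
    """
    lines = [
        title,
        "=" * len(title),
        "",
        summary,
        "",
        "Keywords: " + ", ".join(keywords),
        "",
        "Columns:",
    ]
    for column_name, meaning in columns.items():
        lines.append(f"- {column_name}: {meaning}")
    return "\n".join(lines) + "\n"


# Hypothetical example dataset.
readme_text = render_readme(
    title="Example survey data",
    summary="Responses to a fictional questionnaire, one row per respondent.",
    keywords=["survey", "example"],
    columns={
        "age": "Age of the respondent in years",
        "region": "Region of residence, free text",
    },
)

print(readme_text)
```

Saving the result as `README.txt` next to the data files already gives a human reader the context needed to judge whether the dataset can be reused.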

Different disciplines also have different readiness for interoperability. In some fields, vocabularies and ontologies are highly advanced and in common use, while in others, there are not yet any clear practices or an established culture for the use of such tools. In both cases, research support professionals should be able to provide the needed services, and developing concrete service pathways for these cases is a goal for the future. It is also very important to create a way for data professionals to document and share (in a FAIR way!) the best and worst cases of interoperability, including the tools, vocabularies, and experiences using them.

Although a single workshop is not going to solve the problem of data interoperability, this event was a great first step towards understanding its meaning and necessity. The workshop will help foster future discussions on discipline- and organisation-specific challenges, shared experiences, and required tools for the future. Data interoperability might have lost part of its mystique, but it has gained a lot more practicality in return.

This blog was edited by Tessa de Roo and Pascal Flohr.

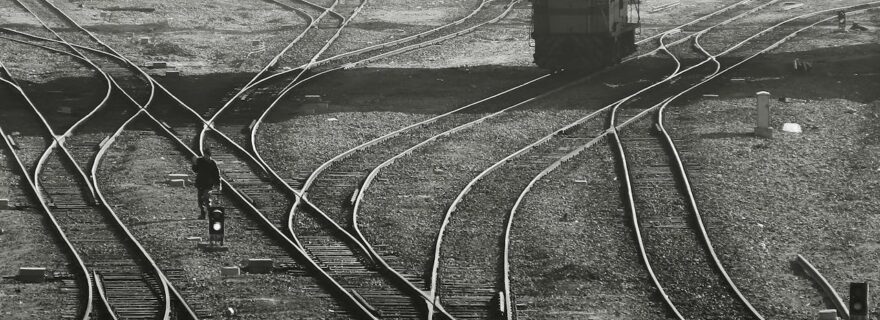

Banner image by Pexels/Behrouz Alimardani.